
YouTube recently rolled out a new feature called Thumbnail Testing, causing quite a buzz and excitement among the YouTube creator community. Initially, the community was filled with expectations, thinking this tool would revolutionize how viewers interact with YouTube thumbnails. However, now that the feature is live, it’s clear that it’s not quite what many had envisioned.

As a YouTuber with a monthly viewership of 1 to 1.5 million on my channel “LebenUSA”, I’ve personally found the Thumbnail Testing tool to be rather ineffective, and I’ve stopped using it.

In this article, I’ll explain why it might not be as helpful as it seems.

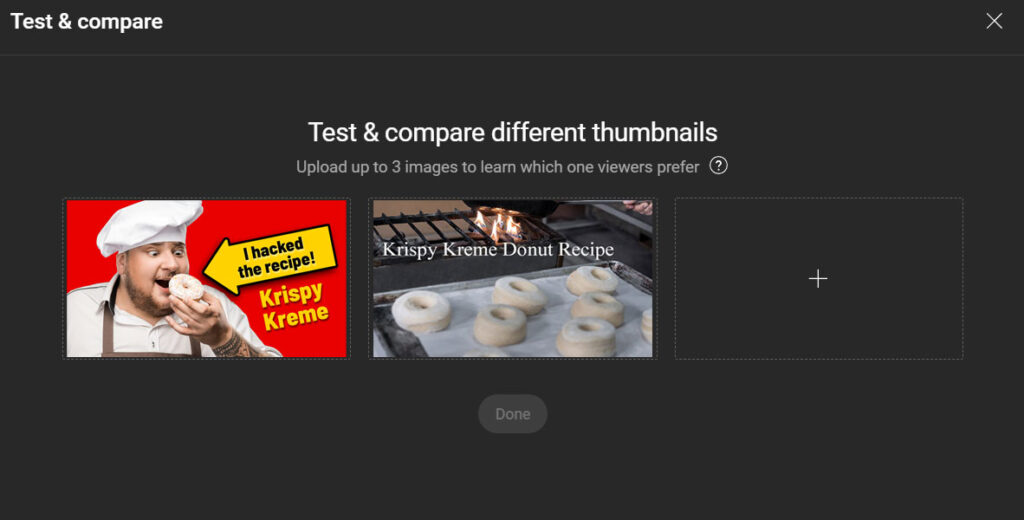

How Does It Work?

Creators can upload up to three different thumbnails for a single video. YouTube then randomly shows these thumbnails to different viewers and tracks which one performs best. After some time, creators can review the performance data and pick the most effective thumbnail, or they can wait 14 days for YouTube to automatically choose the best-performing one.

Expectations

This sounds like a perfect scenario for those looking to boost their thumbnail engagement, but there’s a catch. Despite what you might expect, Thumbnail Testing doesn’t actually show which thumbnail gets the most clicks. Many creators hoped this feature would help them learn which images draw viewers in, potentially guiding them to create highly engaging thumbnails. Unfortunately, YouTube avoids this direct approach to prevent creators from potentially slipping into clickbait practices.

The new feature isn’t quite the tool for mastering viewer engagement that many creators hoped for.

Reality

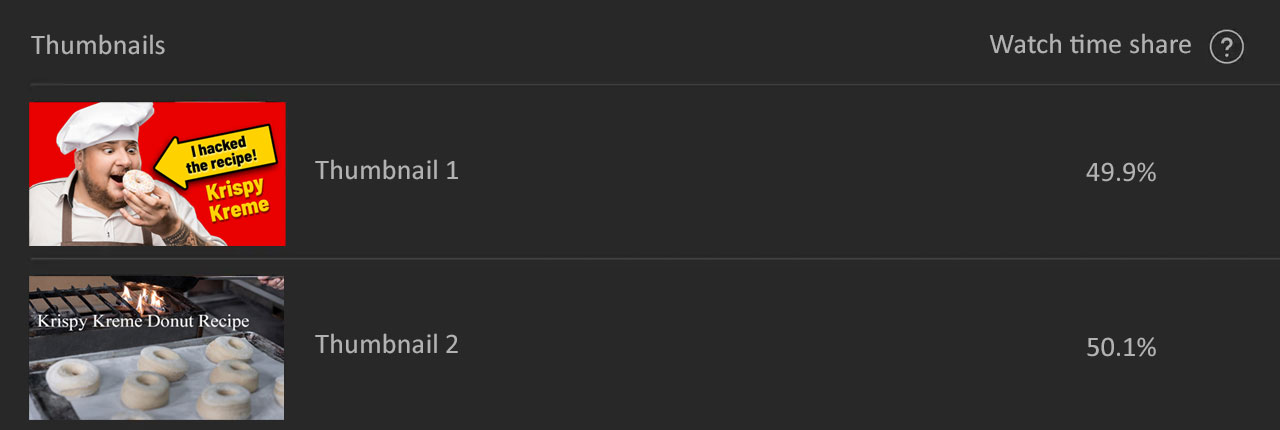

So, what does YouTube’s Thumbnail Testing actually do? YouTube has rolled out a metric with a complex-sounding name: “Watch Time Share.”

Here’s how it works: If you’re running Thumbnail Testing with two thumbnails for a 10-minute video and viewers who click on Thumbnail A watch for only 2 minutes, while those who click on Thumbnail B watch for 8 minutes, YouTube will calculate the watch times accordingly. You’ll see that Thumbnail A accounted for 20% of the watch time and Thumbnail B for 80%. Clearly, in this scenario, Thumbnail B is the winner.
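The arithmetic behind that example can be sketched in a few lines. This is only an illustration of the calculation as described above, not YouTube’s actual formula: the function name `watch_time_share` is hypothetical, and the sketch assumes the share is simply each thumbnail’s average watch time divided by the sum across all tested thumbnails.

```python
def watch_time_share(avg_minutes_watched):
    """Return each thumbnail's share of the combined average watch time.

    Assumption: the share is the per-thumbnail average watch time divided
    by the sum over all thumbnails, as in the 2-minute vs. 8-minute example.
    """
    total = sum(avg_minutes_watched.values())
    return {name: minutes / total for name, minutes in avg_minutes_watched.items()}


# Viewers arriving via Thumbnail A watch 2 minutes, via Thumbnail B 8 minutes.
shares = watch_time_share({"A": 2.0, "B": 8.0})
print(shares)  # {'A': 0.2, 'B': 0.8}
```

Note that click counts never enter this calculation, which is the point the rest of this article builds on.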

What this implies is that the “Watch Time Share” metric shows how long viewers watch your video after clicking on a thumbnail. In other words, it measures viewer retention rather than click rates.

Why It’s Useless

Many prominent YouTubers, especially those who, like me, have over 100K subscribers and monthly viewership approaching or exceeding a million, have noticed a peculiar trend: regardless of the type of thumbnail uploaded, the Watch Time Share tends to distribute fairly evenly. This is also becoming apparent among smaller YouTubers. Essentially, if you upload two thumbnails, each often ends up with about 50% of the so-called “Watch Time Share”. With three thumbnails, the division usually appears to be roughly 33% each.

Here’s a straightforward explanation for this phenomenon: If your video is engaging, once viewers click on a thumbnail and start watching, they tend to view the video similarly to how others do, regardless of the thumbnail that initially attracted them. This indicates that the specific thumbnail clicked doesn’t significantly influence how long they watch the video; the content itself is what matters.

An Example

Let’s clarify this with an example. Suppose you upload a 10-minute video titled “How to Make Krispy Kreme Donuts.” You decide to test two thumbnails:

Thumbnail A is eye-catching.

It features a chef holding a donut and claiming to have hacked the Krispy Kreme recipe.

Thumbnail B is understated.

It simply shows a mediocre image with a text overlay.

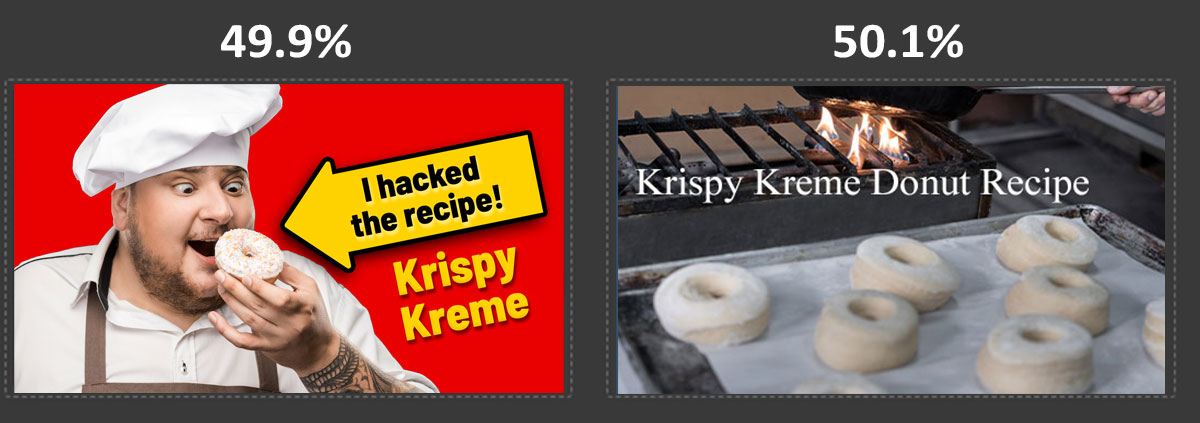

- Thumbnail A is visually striking and achieves an impressive Click-Through Rate (CTR) of 22%, meaning it successfully entices 22 out of every 100 viewers to click on it. Once they start watching the 10-minute video, they stick around for an average of 7 minutes. Clearly, Thumbnail A is effective at drawing viewers in.

- Thumbnail B, on the other hand, seems lackluster by comparison, with a mere 4% CTR. This means only 4 out of every 100 viewers choose to click on it. However, those who do click on Thumbnail B also watch the video for an average of 7 minutes — just like those who clicked on Thumbnail A.
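To make the gap concrete, here is a minimal sketch of the two thumbnails from this example. It assumes a hypothetical 100 impressions per thumbnail and, as in the earlier explanation, treats Watch Time Share as each thumbnail’s average watch time divided by the sum across thumbnails. All variable names are illustrative and do not come from any YouTube API.

```python
IMPRESSIONS = 100  # hypothetical number of impressions per thumbnail

thumbnails = {
    "A": {"ctr": 0.22, "avg_minutes": 7.0},  # eye-catching thumbnail
    "B": {"ctr": 0.04, "avg_minutes": 7.0},  # understated thumbnail
}

# Clicks depend on CTR, so the two thumbnails differ a lot here.
clicks = {name: round(IMPRESSIONS * t["ctr"]) for name, t in thumbnails.items()}

# Assumed share calculation: average watch time per click, normalized.
total_avg = sum(t["avg_minutes"] for t in thumbnails.values())
share = {name: t["avg_minutes"] / total_avg for name, t in thumbnails.items()}

print(clicks)  # {'A': 22, 'B': 4}
print(share)   # {'A': 0.5, 'B': 0.5}
```

Under these assumptions the test reports an even 50/50 split, even though Thumbnail A brought in more than five times as many viewers.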

From my own experience, I’ve noticed that once viewers click on a thumbnail, they tend to forget how engaging the thumbnail was and are focused on the content. If they like the video, they watch it longer; if not, they tune out. Their engagement is determined by the quality of the video, not the thumbnail that brought them there.

However, a less engaging thumbnail does attract fewer viewers initially, while a more eye-catching thumbnail significantly increases visibility and initial clicks. As a creator, I would prefer a tool that tells me which thumbnail gets more clicks, not how long viewers watch after clicking. Unfortunately, the “Watch Time Share” metric, as it stands, doesn’t provide this and thus has no value for me.

Why Did YouTube Introduce the Thumbnail Testing Tool?

I suspect that this tool wasn’t designed for established YouTubers. We know that the key to success on YouTube involves producing quality content and creating thumbnails that capture interest and curiosity without misleading viewers. Ideally, a tool that shows which thumbnails drive more clicks would benefit established creators, who aim to meet viewer expectations without resorting to clickbait.

However, YouTube’s goal with this tool seems to be educational, aimed at encouraging creators to focus more on video quality than on crafting deceptive clickbait. When you look at platforms like TikTok, it’s evident that many creators lean heavily on clickbait to attract views. The rampant use of clickbait, spam, and scams is an unfortunate reality in social media, where the pressure to maximize viewer numbers often leads to shortcuts.

YouTube wants to steer clear of this approach. They understand that long-term success on their platform comes from fulfilling viewer expectations with substantive content. The Thumbnail Testing tool is particularly useful for identifying misleading thumbnails. If a thumbnail test shows a significant discrepancy in watch times (say, one thumbnail garners 80% of the watch time while another gets only 20%), it suggests that the less successful thumbnail may be misleading viewers.

Thus, the Thumbnail Testing tool isn’t really about A/B testing in the traditional sense, where performance is measured strictly through clicks or conversions. Instead, it’s a tool to reinforce YouTube’s philosophy: create honest, engaging thumbnails that accurately reflect the content.

Essentially, it’s an educational tool designed to promote integrity and discourage deceptive practices in thumbnail creation.

And it’s a huge disappointment for those who were expecting a real A/B testing tool.
